Kompass

The navigation engine of EMOS – GPU-accelerated, event-driven autonomy for mobile robots

Kompass lets you create sophisticated navigation stacks with blazingly fast, hardware-agnostic performance. It is the only open-source navigation framework with cross-vendor GPU acceleration.

Why Kompass?

Robotic navigation isn’t about perfecting a single component; it is about architecting a system that survives contact with the real world.

While metric navigation has matured, deploying robots extensively in dynamic environments remains an unsolved challenge. As highlighted by the ICRA BARN Challenges, static pipelines fail when faced with the unpredictability of the physical world:

“A single stand-alone approach that is able to address all variety of obstacle configurations all together is still out of our reach.” — Lessons from The 3rd BARN Challenge (ICRA 2024)

Kompass was built to fill this gap. Unlike existing solutions that rely on rigid behavior trees, Kompass is an event-driven, GPU-native stack designed for maximum adaptability and hardware efficiency.

Adaptive Event-Driven Core – The stack reconfigures itself on the fly based on environmental context. Use Pure Pursuit on open roads, switch to DWA (Dynamic Window Approach) indoors, fall back to a docking controller near the station – all triggered by events, not brittle behavior trees. Adapt to external world events (“Crowd Detected”, “Entering Warehouse”), not just internal robot states.

GPU-Accelerated, Vendor-Agnostic – Core algorithms are written in C++ with SYCL-based GPU support. Runs natively on NVIDIA, AMD, Intel, and other GPUs without vendor lock-in – the first navigation framework to support cross-GPU acceleration. Up to 3,106x speedups over CPU-based approaches.

ML Models as First-Class Citizens – Event-driven design means ML model outputs can directly reconfigure the navigation stack. Use object detection to switch controllers, VLMs to answer abstract perception queries, or EmbodiedAgents vision components for target tracking – all seamlessly integrated through EMOS’s unified architecture.

Pythonic Simplicity – Configure a sophisticated, multi-fallback navigation system in a single readable Python script. Core algorithms are decoupled from ROS wrappers, so upgrading ROS distributions won’t break your navigation logic. Extend with new planners in Python for prototyping or C++ for production.
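To make the event-driven idea concrete, here is a minimal, framework-free sketch of controller switching triggered by events. It does not use the Kompass API: `NavigationStack`, `EventBus`, and the event names are hypothetical stand-ins chosen for illustration, and the controllers are represented only by their names.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class NavigationStack:
    """Hypothetical stand-in for a stack with a swappable controller."""

    controller: str = "PurePursuit"
    history: List[str] = field(default_factory=list)

    def use_controller(self, name: str) -> None:
        # Remember the previous controller, then switch to the new one.
        self.history.append(self.controller)
        self.controller = name


class EventBus:
    """Minimal dispatcher mapping an event name to a list of actions."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Callable[[], None]]] = {}

    def on(self, event: str, action: Callable[[], None]) -> None:
        self._handlers.setdefault(event, []).append(action)

    def emit(self, event: str) -> int:
        """Run every action registered for `event`; return how many ran."""
        actions = self._handlers.get(event, [])
        for action in actions:
            action()
        return len(actions)


stack = NavigationStack()
bus = EventBus()

# External world events trigger reconfiguration (names are illustrative).
bus.on("on_open_road", lambda: stack.use_controller("PurePursuit"))
bus.on("entering_warehouse", lambda: stack.use_controller("DWA"))
bus.on("near_docking_station", lambda: stack.use_controller("Docking"))

bus.emit("entering_warehouse")
bus.emit("near_docking_station")
print(stack.controller)  # Docking
```

The point of the sketch is the shape of the logic: reconfiguration rules are flat event-to-action bindings that can be added or removed independently, rather than branches nested inside a tree.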

Architecture

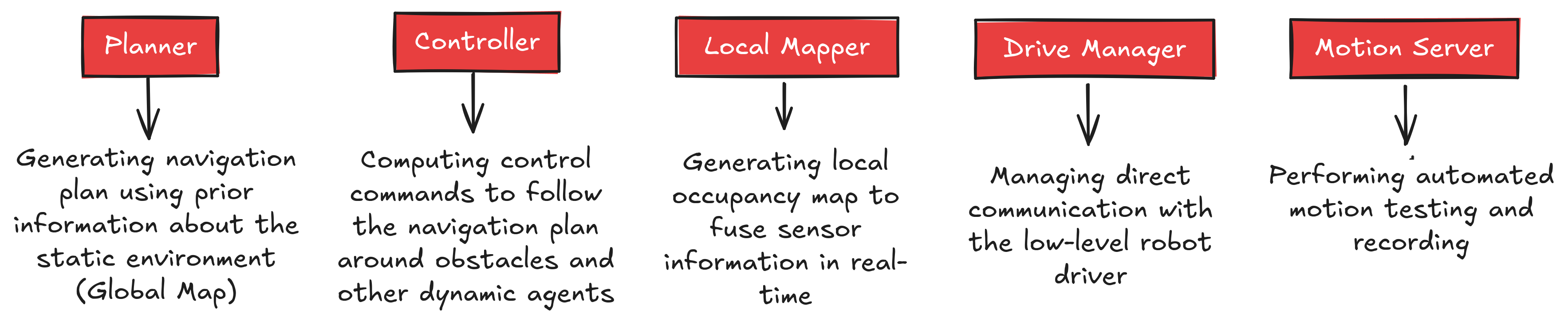

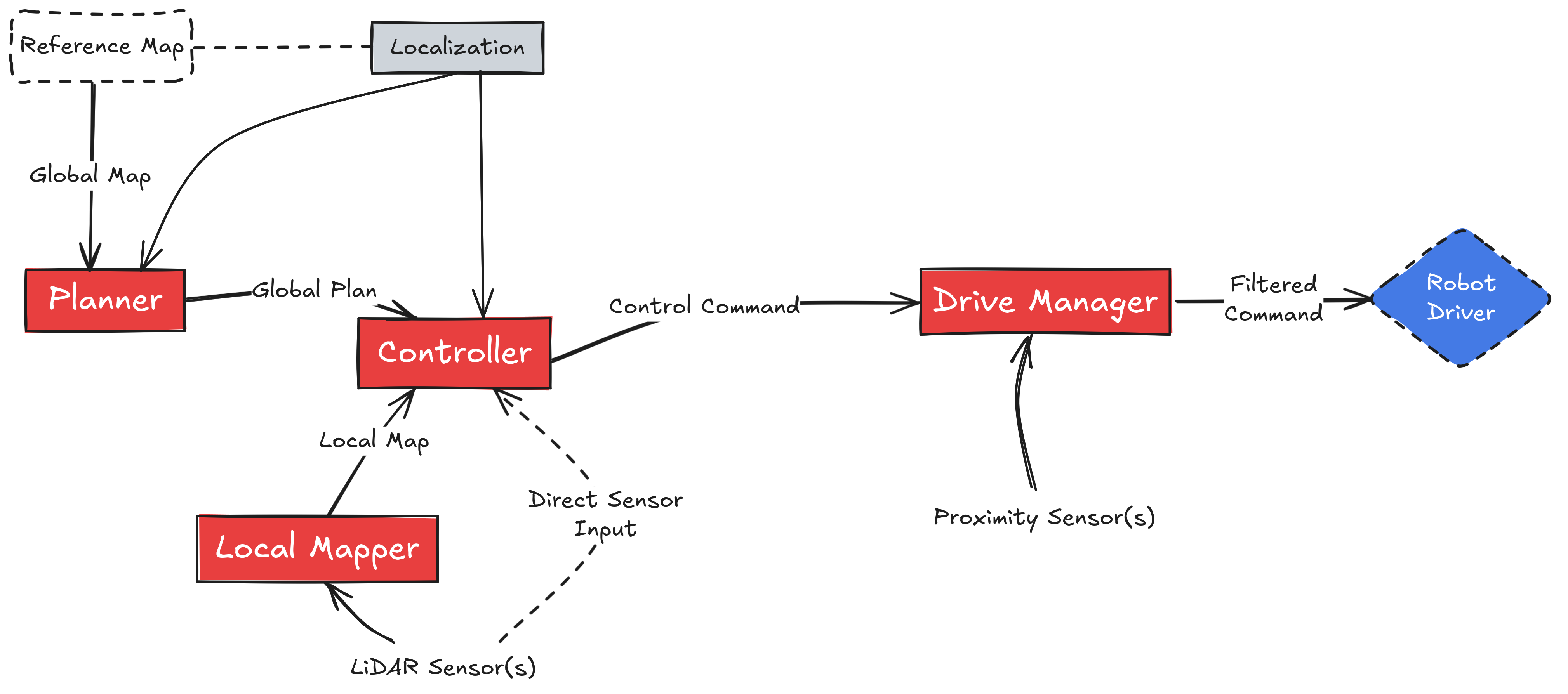

Kompass has a modular, event-driven architecture, divided into several interacting components, each responsible for one navigation subtask.

The main components of the Kompass navigation stack.

Each component runs as a ROS 2 lifecycle node and communicates with the other components using ROS 2 topics, services, or action servers:

System Diagram for Point Navigation
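Because each component runs as a ROS 2 lifecycle node, it moves through the standard managed-node states before it starts doing work. As a rough illustration in plain Python (not rclpy and not Kompass code), the sketch below models only the four primary states and the transitions between them. The intermediate transition states and error handling are omitted, and `ManagedComponent` is a hypothetical name.

```python
from enum import Enum


class State(Enum):
    UNCONFIGURED = "unconfigured"
    INACTIVE = "inactive"
    ACTIVE = "active"
    FINALIZED = "finalized"


# (current state, transition name) -> next state, primary states only.
TRANSITIONS = {
    (State.UNCONFIGURED, "configure"): State.INACTIVE,
    (State.INACTIVE, "cleanup"): State.UNCONFIGURED,
    (State.INACTIVE, "activate"): State.ACTIVE,
    (State.ACTIVE, "deactivate"): State.INACTIVE,
    (State.UNCONFIGURED, "shutdown"): State.FINALIZED,
    (State.INACTIVE, "shutdown"): State.FINALIZED,
    (State.ACTIVE, "shutdown"): State.FINALIZED,
}


class ManagedComponent:
    """Hypothetical component that follows the lifecycle state machine."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.state = State.UNCONFIGURED

    def trigger(self, transition: str) -> State:
        key = (self.state, transition)
        if key not in TRANSITIONS:
            raise ValueError(
                f"'{transition}' is not allowed from state '{self.state.value}'"
            )
        self.state = TRANSITIONS[key]
        return self.state


component = ManagedComponent("example_component")
component.trigger("configure")  # load parameters, set up interfaces
component.trigger("activate")   # start processing
print(component.name, component.state.value)
```

A managed lifecycle of this kind is what lets a component be deactivated, reconfigured, and reactivated at runtime instead of being restarted.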

Minimum Sensor Requirements

Kompass is designed to be flexible in terms of sensor configurations. However, at least the following sensors are required for basic autonomous navigation:

Odometry Source (e.g., wheel encoders, IMU or visual odometry)

Obstacle Detection Sensor (e.g., 2D LiDAR or Depth Camera)

Robot Pose Source (e.g., localization system such as AMCL or visual SLAM)

These provide the minimal data necessary for localization, mapping, and safe path execution.

Optional Sensors for Enhanced Features

Additional sensors can enhance navigation capabilities and unlock advanced features:

RGB Camera(s) – Enables vision-based navigation, object tracking, and semantic navigation.

Depth Camera – Improves obstacle avoidance in 3D environments and enables more accurate object tracking.

3D LiDAR – Enhances perception in complex environments with full 3D obstacle detection.

GPS – Enables outdoor navigation and geofenced planning.

UWB / BLE Beacons – Improves localization in GPS-denied environments.

Kompass supports dynamic configuration, allowing it to operate with minimal sensors and scale up for complex applications when additional sensing is available.
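The relationship between required and optional sensors can be sketched as a simple capability check. This is an illustrative helper, not part of Kompass: the function `plan_capabilities`, the sensor keys, and the feature labels are assumptions that mirror the two lists above.

```python
# Sensor roles that must be present for basic autonomous navigation.
REQUIRED = {"odometry", "obstacle_detection", "robot_pose"}

# Optional sensors and the feature each one unlocks (labels are illustrative).
OPTIONAL_FEATURES = {
    "rgb_camera": "vision-based navigation and object tracking",
    "depth_camera": "3D obstacle avoidance",
    "lidar_3d": "full 3D obstacle detection",
    "gps": "outdoor navigation and geofenced planning",
    "uwb_ble": "localization in GPS-denied environments",
}


def plan_capabilities(available):
    """Return the features a given sensor set can support.

    Raises ValueError if any required sensor role is missing.
    """
    available = set(available)
    missing = sorted(REQUIRED - available)
    if missing:
        raise ValueError(f"Missing required sensor sources: {', '.join(missing)}")
    features = ["basic autonomous navigation"]
    features += [
        feature
        for sensor, feature in OPTIONAL_FEATURES.items()
        if sensor in available
    ]
    return features


minimal = {"odometry", "obstacle_detection", "robot_pose"}
print(plan_capabilities(minimal))
print(plan_capabilities(minimal | {"gps", "rgb_camera"}))
```

With only the minimal set, the result contains just basic navigation. Each additional sensor extends the list without changing the required baseline.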