Why EMOS

The robotics industry is undergoing a structural shift. Robots are transitioning from single-purpose tools – hard-coded for fixed tasks – to general-purpose platforms that must perform different jobs in different environments. While the AI industry races to build foundation models, a critical vacuum remains in the infrastructure needed to ground these models on robots that can actually be used in the field.

EMOS fills that vacuum. It is the missing orchestration layer between capable hardware and capable AI.

The Problem

Modern robot hardware ships with stable locomotion controllers and basic SDKs, but little else. Getting a robot to actually do something useful – navigate a cluttered warehouse, respond to voice commands, recover from failures – requires stitching together a fragile patchwork of ROS packages, custom launch files, and one-off scripts. Every new deployment becomes a bespoke R&D project.

This approach has three fatal flaws:

It doesn’t scale. Every new robot, environment, or task requires months of custom engineering.

It doesn’t adapt. Rigid state machines and declarative graphs cannot handle the chaos of the real world – sensor failures, dynamic obstacles, network drops.

It doesn’t transfer. Software written for one robot rarely works on another, even if the task is identical.

What EMOS Changes

From Custom Projects to Universal Recipes

EMOS replaces brittle, robot-specific software projects with Recipes: reusable, hardware-agnostic application packages written in pure Python. A Recipe is a complete agentic workflow – perception, reasoning, navigation, memory, and interaction – defined in a single script and launched with one command.

One Robot, Many Tasks: The same robot can run different Recipes for different jobs – inspection in the morning, delivery at noon, security patrol at night.

One Recipe, Many Robots: A Recipe written for a wheeled AMR runs identically on a quadruped. EMOS handles the kinematic translation beneath the surface.
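To make the "One Recipe, Many Robots" idea concrete, here is a minimal sketch in plain Python. It does not use the EMOS API: `Twist`, `Platform`, `DifferentialDrive`, `Quadruped`, and `patrol_recipe` are hypothetical names invented for illustration, and the quadruped mapping is a placeholder rather than real gait control. The point is only that the task logic emits platform-neutral commands and a per-platform adapter does the translation.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Twist:
    """Platform-neutral motion command: forward speed (m/s), turn rate (rad/s)."""
    linear: float
    angular: float


class Platform(Protocol):
    """Hypothetical adapter interface (an assumption, not the EMOS API)."""
    def apply(self, cmd: Twist) -> dict: ...


@dataclass
class DifferentialDrive:
    wheel_base: float  # distance between the two drive wheels, in metres

    def apply(self, cmd: Twist) -> dict:
        # Standard differential-drive kinematics: the turn rate becomes a
        # speed difference between the left and right wheels.
        half = cmd.angular * self.wheel_base / 2.0
        return {"left_mps": cmd.linear - half, "right_mps": cmd.linear + half}


@dataclass
class Quadruped:
    trot_threshold: float = 0.5  # illustrative speed above which we trot

    def apply(self, cmd: Twist) -> dict:
        # Placeholder mapping: pick a gait from the commanded speed.
        gait = "trot" if abs(cmd.linear) > self.trot_threshold else "walk"
        return {"gait": gait, "forward_mps": cmd.linear, "yaw_rate": cmd.angular}


def patrol_recipe(platform: Platform) -> list[dict]:
    """A toy 'recipe': the same command sequence, whatever the platform."""
    plan = [Twist(1.0, 0.0), Twist(0.2, 0.5), Twist(0.0, 0.0)]
    return [platform.apply(cmd) for cmd in plan]


# The recipe is unchanged; only the adapter differs.
wheeled = patrol_recipe(DifferentialDrive(wheel_base=0.4))
legged = patrol_recipe(Quadruped())
```

In this sketch the recipe never inspects which platform it received, which is the property the hardware-agnostic claim depends on.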

From Rigid Graphs to Adaptive Agents

Legacy stacks treat failure as a system crash. EMOS treats it as a control flow state. Its event-driven architecture lets robots reconfigure themselves at runtime:

- Hot-swap ML models when the network drops
- Switch navigation algorithms when the robot gets stuck
- Trigger recovery maneuvers based on sensor events
- Compose complex behaviors with logic gates (AND, OR, NOT) across multiple data streams

This isn’t bolted-on error handling – adaptivity is a first-class primitive in the system design.
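The event-driven pattern described above can be sketched in a few lines of plain Python. This is not the EMOS event API: `Condition`, `on`, `Reactor`, the topic names, and the model and planner labels are all hypothetical. The sketch shows conditions over several data streams being combined with AND (`&`), OR (`|`), and NOT (`~`), and actions reconfiguring the system when a combined condition holds.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Condition:
    """A predicate over the latest value of each stream, composable with & | ~."""
    check: Callable[[dict], bool]

    def __and__(self, other):
        return Condition(lambda s: self.check(s) and other.check(s))

    def __or__(self, other):
        return Condition(lambda s: self.check(s) or other.check(s))

    def __invert__(self):
        return Condition(lambda s: not self.check(s))


def on(topic, predicate):
    """Condition that holds when `topic` has a value satisfying `predicate`."""
    return Condition(lambda s: topic in s and predicate(s[topic]))


class Reactor:
    """Hypothetical event loop: re-evaluates every rule on each new message."""

    def __init__(self):
        self.rules = []
        self.state = {}

    def when(self, condition, action):
        self.rules.append((condition, action))

    def publish(self, topic, value):
        self.state[topic] = value
        # Level-triggered: an action runs on every message while its
        # condition holds, so actions should be idempotent.
        for condition, action in self.rules:
            if condition.check(self.state):
                action(self.state)


# Illustrative runtime configuration; the labels are made up.
config = {"model": "cloud-vlm", "planner": "dwa"}

network_down = on("network_ok", lambda ok: not ok)
stuck = on("speed", lambda v: v < 0.01) & on("cmd_speed", lambda v: v > 0.1)
estop = on("estop", bool)

reactor = Reactor()
reactor.when(network_down, lambda s: config.update(model="local-vlm"))
reactor.when(stuck & ~estop, lambda s: config.update(planner="pure_pursuit"))

reactor.publish("network_ok", True)
reactor.publish("cmd_speed", 0.5)
reactor.publish("speed", 0.4)
before = dict(config)                  # nothing has fired yet

reactor.publish("network_ok", False)   # triggers the model swap
reactor.publish("speed", 0.0)          # commanded to move but stationary
```

A production system would more likely use edge-triggered events with debouncing; the level-triggered loop here keeps the sketch short.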

From Stateless Tools to Embodied Agents

Current robots have logs, not memory. They record data for after-the-fact analysis but cannot recall it at runtime. EMOS introduces embodiment primitives that give robots a sense of self and history:

Spatio-Temporal Semantic Memory: A queryable world-state backed by vector databases that persists across tasks.

Self-Referential State: Components can inspect and modify each other’s configuration, enabling system-level awareness rather than isolated self-repair.
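As a rough illustration of what a spatio-temporal semantic memory query involves, the sketch below stores observations with an embedding, a position, and a timestamp, then ranks them by cosine similarity after filtering by distance and recency. Everything here is an assumption made for illustration: `Observation` and `SemanticMemory` are hypothetical names, the three-dimensional embeddings are hand-written stand-ins for a real embedding model, and the in-memory list stands in for a vector database.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    label: str
    embedding: tuple   # stand-in for the output of an embedding model
    position: tuple    # (x, y) in the map frame, metres
    timestamp: float   # seconds


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SemanticMemory:
    """Toy in-memory store; a real system would back this with a vector DB."""

    def __init__(self):
        self._items = []

    def add(self, obs):
        self._items.append(obs)

    def query(self, embedding, near=None, radius=math.inf,
              since=-math.inf, top_k=1):
        # Filter by time and space first, then rank by semantic similarity.
        candidates = [
            o for o in self._items
            if o.timestamp >= since
            and (near is None or math.dist(o.position, near) <= radius)
        ]
        candidates.sort(key=lambda o: cosine(o.embedding, embedding),
                        reverse=True)
        return candidates[:top_k]


memory = SemanticMemory()
memory.add(Observation("fire extinguisher", (1.0, 0.0, 0.0), (2.0, 3.0), 100.0))
memory.add(Observation("charging dock", (0.0, 1.0, 0.0), (10.0, 1.0), 200.0))
memory.add(Observation("fire extinguisher", (0.9, 0.1, 0.0), (40.0, 8.0), 300.0))

query_vec = (1.0, 0.0, 0.0)  # pretend embedding of "fire extinguisher"
nearby = memory.query(query_vec, near=(0.0, 0.0), radius=5.0)
recent = memory.query(query_vec, since=250.0)
```

The same semantic query returns different answers depending on the spatial and temporal filters, which is what distinguishes this kind of memory from a plain log.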

From Separate Backends to Auto-Generated Interaction

In traditional robotics, the automation logic is “backend” and the user interface is a separate custom project. EMOS treats the Recipe as the single source of truth – defining the logic automatically generates a bespoke Web UI for real-time monitoring, configuration, and control. No separate frontend development required.

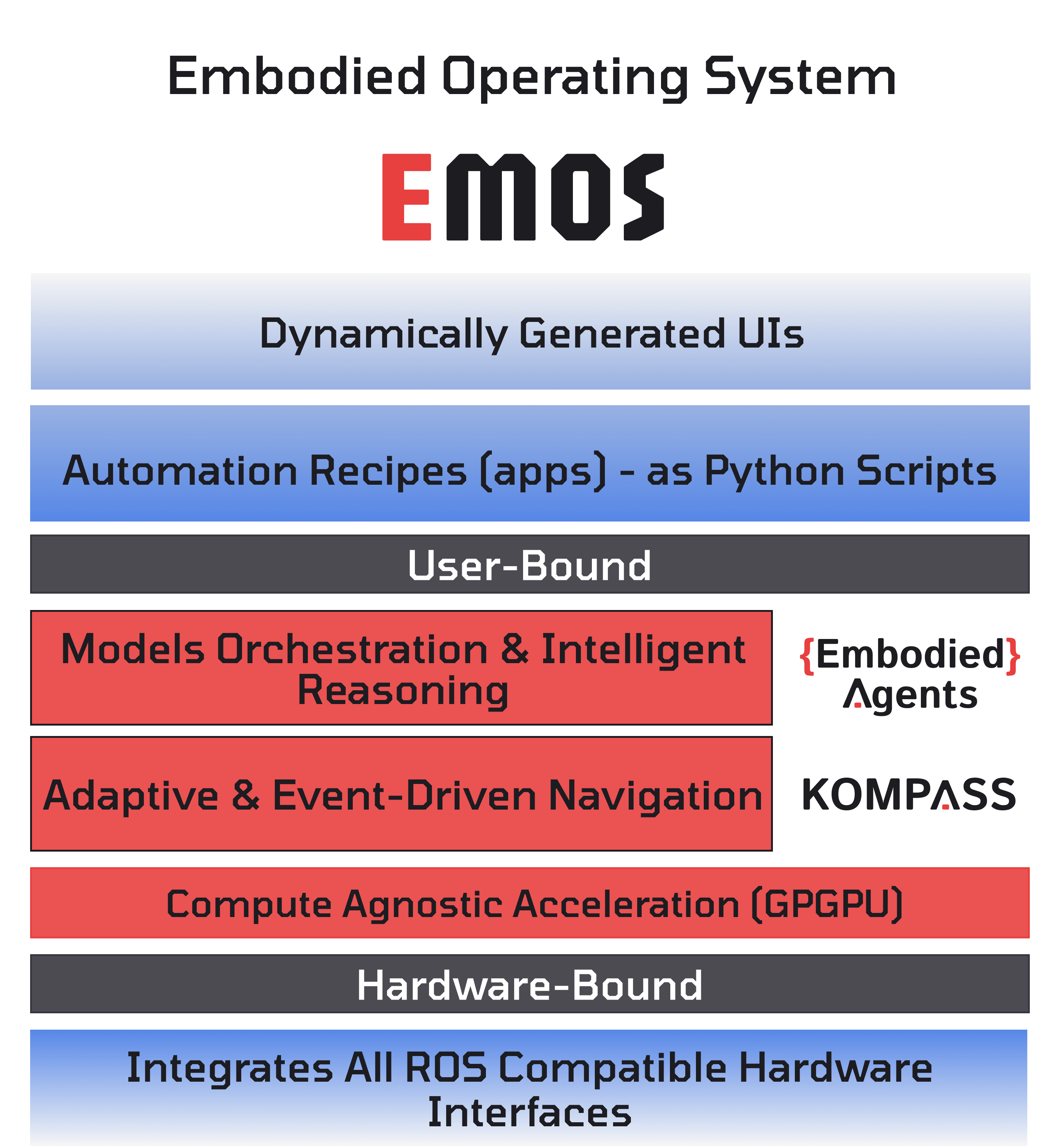
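One way to picture "the Recipe as the single source of truth" is to derive a form description directly from a typed configuration object. The sketch below is an assumption about the general technique, not a description of how EMOS generates its Web UI: `PatrolConfig`, `WIDGETS`, and `ui_schema` are hypothetical names.

```python
from dataclasses import dataclass, fields

# Map Python field types to generic form widgets (illustrative choices).
WIDGETS = {bool: "checkbox", int: "number", float: "number", str: "text"}


@dataclass
class PatrolConfig:
    """Hypothetical configuration for a patrol task."""
    route_name: str = "perimeter"
    max_speed_mps: float = 0.8
    loop: bool = True


def ui_schema(config):
    """Describe one form control per dataclass field.

    Relies on field annotations being real types, so this module must not
    use `from __future__ import annotations`.
    """
    return [
        {
            "name": f.name,
            "widget": WIDGETS.get(f.type, "text"),
            "value": getattr(config, f.name),
        }
        for f in fields(config)
    ]


schema = ui_schema(PatrolConfig())
```

Because the schema is computed from the configuration class, adding a field to the class adds a control to the form without any separate frontend change.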

The Architecture

EMOS is built on three open-source components that work in tandem:

| Component | Layer | What It Does |
|---|---|---|
| | Intelligence | Agentic graphs of ML models with semantic memory, information routing, and adaptive reconfiguration |
| | Navigation | GPU-powered planning and control for real-world mobility across all motion models |
| | Architecture | Event-driven system primitives, lifecycle management, and the imperative launch API that underpins both layers |

Together, they provide a complete runtime: from raw sensor data to intelligent action, with adaptivity and resilience built in at every level.

Who Is EMOS For

1. Robot Managers & End-Users

Use pre-built Recipes or write your own with the high-level Python API. Focus on your business logic – EMOS handles the robotics complexity.

2. Integrators & Solution Providers

EMOS is your SDK for the physical world. Connect robot events to ERPs, building management systems, or fleet software using the event-action architecture. Spend your time on enterprise integration, not low-level robotics plumbing.

3. OEM Teams

Write a single Hardware Abstraction Layer plugin and instantly unlock the entire EMOS ecosystem for your chassis. Every Recipe written by any developer runs on your hardware without custom code.

EMOS Is Built for the Real World

EMOS is not a research prototype. It is shaped by the demands of production deployments – autonomous inspection patrols, security operations, and field robotics on quadruped and wheeled platforms. Every feature in the stack exists because a real-world deployment needed it.

Get Started

Get up and running in minutes.

Step-by-step tutorials from simple to production-grade.