EMOS – The Embodied Operating System

The open-source unified orchestration layer for Physical AI.

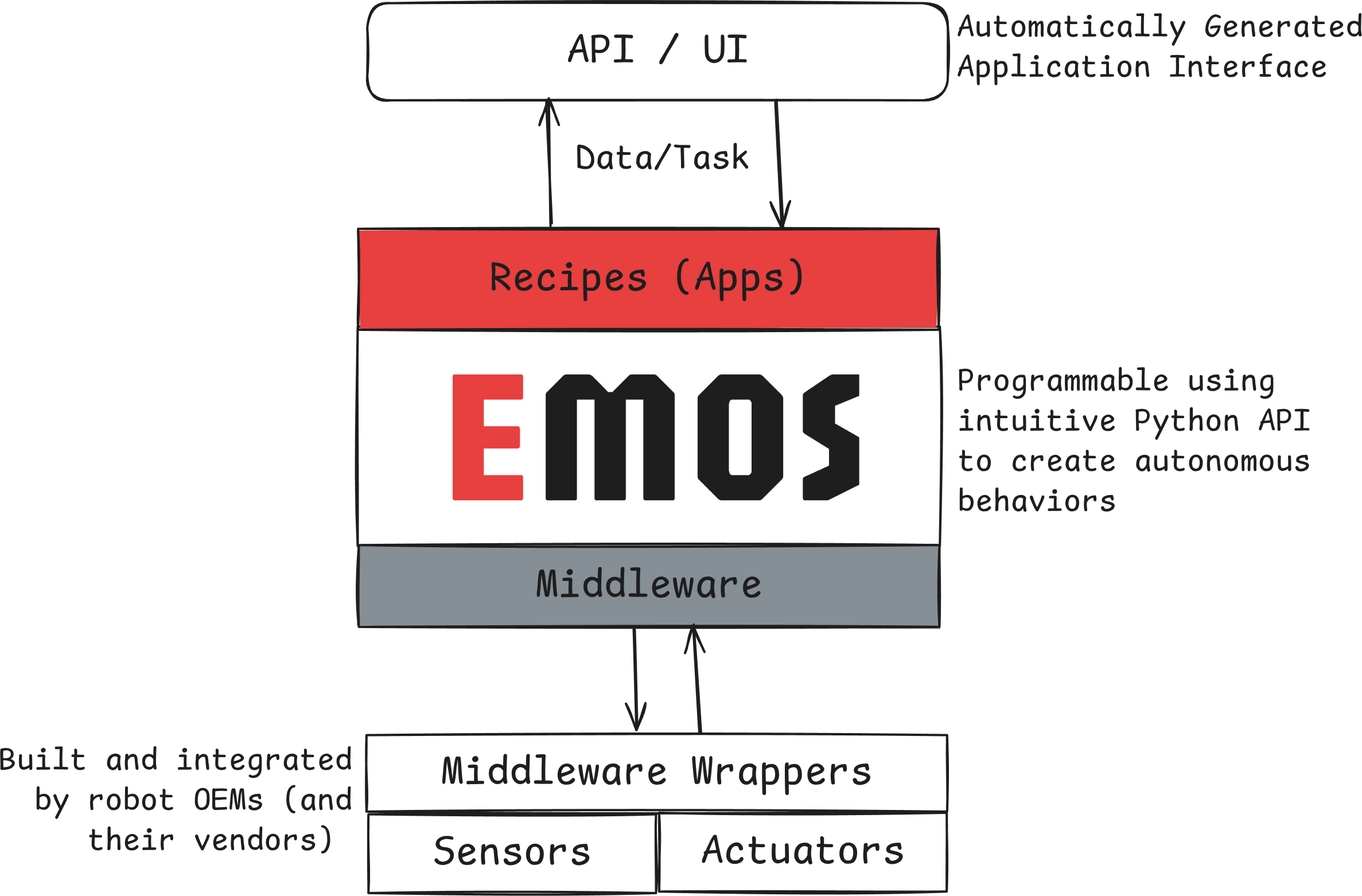

EMOS transforms robots into Physical AI Agents. It provides a hardware-agnostic runtime that lets robots see, think, move, and adapt – all orchestrated from pure Python scripts called Recipes.

Write a Recipe once, deploy it on any robot – from wheeled AMRs to humanoids – without rewriting code.


What You Can Build

Wire together vision, language, speech, and memory components into agentic workflows. Route queries by intent, answer questions about the environment, or build a semantic map – all from a single Python script.
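Routing queries by intent can be pictured with a minimal plain-Python sketch. It does not use the EMOS API: the handler functions, the `ROUTES` table, and the keyword-based `classify_intent` are all hypothetical stand-ins, and a real Recipe would presumably delegate classification to a language model rather than keyword matching.

```python
from typing import Callable, Dict, Tuple

# Hypothetical handlers; real ones would call vision or language models.
def answer_question(query: str) -> str:
    return f"[question] {query}"

def go_to_location(query: str) -> str:
    return f"[navigate] {query}"

def small_talk(query: str) -> str:
    return f"[chat] {query}"

# Assumed routing table: intent name -> (trigger phrases, handler).
ROUTES: Dict[str, Tuple[Tuple[str, ...], Callable[[str], str]]] = {
    "navigate": (("go to", "drive to", "move to"), go_to_location),
    "question": (("what", "where", "how many", "is there"), answer_question),
}

def classify_intent(query: str) -> str:
    """Return the first intent whose trigger phrase occurs in the query."""
    lowered = query.lower()
    for intent, (phrases, _handler) in ROUTES.items():
        if any(phrase in lowered for phrase in phrases):
            return intent
    return "chat"  # default when nothing matches

def route(query: str) -> str:
    """Dispatch the query to the handler for its classified intent."""
    intent = classify_intent(query)
    handler = ROUTES[intent][1] if intent in ROUTES else small_talk
    return handler(query)
```

Calling `route("Go to the kitchen")` dispatches to the navigation handler, while a query that matches no trigger phrase falls through to `small_talk`.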

GPU-accelerated planning and control for real-world mobility. Point-to-point navigation, path recording, and vision-based target following – across differential drive, Ackermann, and omnidirectional platforms.

Event-driven architecture lets agents reconfigure themselves at runtime. Hot-swap ML models on network failure, switch navigation algorithms when stuck, trigger recovery maneuvers from sensor events, or compose complex behaviors with logic gates.
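The idea of hot-swapping a model on network failure can be sketched in plain Python. This is an illustration of the pattern only, not the EMOS API: `EventBus`, `ModelComponent`, the `"model_unreachable"` event name, and both model functions are hypothetical.

```python
from typing import Callable, Dict, List

class EventBus:
    """Tiny publish/subscribe bus (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Callable[[], None]]] = {}

    def on(self, event: str, handler: Callable[[], None]) -> None:
        self._handlers.setdefault(event, []).append(handler)

    def emit(self, event: str) -> None:
        for handler in self._handlers.get(event, []):
            handler()

class ModelComponent:
    """Wraps a model callable and swaps to a fallback when an event fires."""

    def __init__(self, primary: Callable[[str], str],
                 fallback: Callable[[str], str], bus: EventBus) -> None:
        self.active = primary
        self._fallback = fallback
        self._bus = bus
        # The swap is registered as a reaction to an event, not hard-coded
        # into the inference path.
        bus.on("model_unreachable", self.use_fallback)

    def use_fallback(self) -> None:
        self.active = self._fallback

    def infer(self, prompt: str) -> str:
        try:
            return self.active(prompt)
        except ConnectionError:
            self._bus.emit("model_unreachable")
            return self.active(prompt)  # retry once with the swapped model

def remote_model(prompt: str) -> str:
    raise ConnectionError("network down")  # simulated outage

def local_model(prompt: str) -> str:
    return f"local: {prompt}"
```

With `component = ModelComponent(remote_model, local_model, EventBus())`, the first `component.infer("hi")` hits the simulated outage, emits the event, and is answered by `local_model`. Later calls go straight to the fallback.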

Use vision-language models (VLMs) for high-level task decomposition and vision-language-action models (VLAs) for end-to-end manipulation. Closed-loop control lets a VLM act as a referee that stops an action once it sees that the task is complete.
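The referee pattern amounts to a loop that executes one action step, checks the resulting observation, and stops as soon as the check passes. The sketch below assumes nothing about the EMOS API: `run_until_done`, `SimulatedArm`, and `referee` are hypothetical, and the integer "distance" stands in for a camera frame that a VLM would judge.

```python
from typing import Callable, Optional

def run_until_done(policy_step: Callable[[], int],
                   is_task_complete: Callable[[int], bool],
                   max_steps: int = 50) -> Optional[int]:
    """Run action steps until the referee reports completion.

    Returns the number of steps taken, or None if max_steps is exhausted.
    """
    for step in range(1, max_steps + 1):
        observation = policy_step()        # the action model acts once
        if is_task_complete(observation):  # the referee inspects the result
            return step
    return None

class SimulatedArm:
    """Stand-in for an action policy: each step moves one unit closer."""

    def __init__(self, distance: int) -> None:
        self.distance = distance

    def step(self) -> int:
        self.distance = max(0, self.distance - 1)
        return self.distance

def referee(observation: int) -> bool:
    """Stand-in for a VLM check: the task is done when distance reaches 0."""
    return observation == 0
```

Bounding the loop with `max_steps` keeps the action from running indefinitely if the referee never reports completion.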

What’s Inside

EMOS is built on three open-source components:

| Component | Role |
|---|---|
| Intelligence layer | Agentic graphs of ML models with semantic memory and event-driven reconfiguration |
| Navigation layer | GPU-powered planning and control for real-world mobility |
| Architecture layer | Event-driven system primitives and imperative launch API |

The problem EMOS solves – from custom R&D projects to universal, adaptive robot apps.

Install EMOS and run your first Recipe in minutes.

Build intelligent robot behaviors with step-by-step guides.

Understand the architecture, components, events, and fallbacks.

Package and run Recipes with the emos CLI.

Get the llms.txt for your coding agent and let it write Recipes for you.